NYU Researchers Make Breakthrough in Russian Bot Detection

January 29, 2018

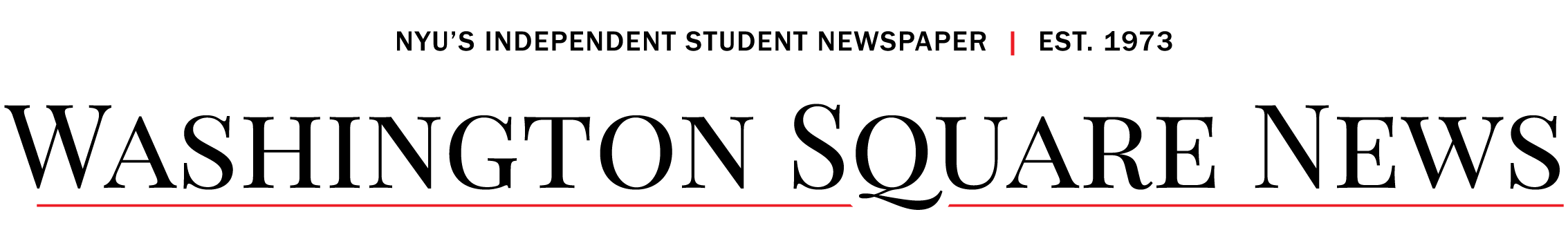

Bots, trolls, misinformation. These terms have recently slipped into the lexicon of nearly all political reporting. Just six days into 2017, and 14 days before President Donald Trump’s inauguration, a 25-page unclassified report by the Director of National Intelligence accused the Russian government of engaging in a sustained misinformation campaign aimed at influencing the 2016 United States presidential election.

Officials from the French, British and German governments have since presented their own accounts of Russian interference. The DNI report, which cited investigations by the CIA, FBI and National Security Agency, claims that Russian actors used state-sponsored media outlets, paid human trolls and weaponized swarms of automated bots to disseminate misinformation and pro-Kremlin propaganda. The report concludes with “high confidence” that the objective of this campaign was to aid Trump on his path to the White House.

Recently, a team of NYU professors and graduate students at the Social Media and Political Participation Lab released a paper in the peer-reviewed journal Big Data. The paper provided commentators and policy makers with breakthrough insights into how governments use bots, software applications that automate tasks online, to alter narratives and ultimately weaken political opposition online. Though the team began looking at bots years before the 2017 findings by U.S. intelligence agencies, its findings are directly relevant to them. The discoveries required the recruitment of over 50 Russian undergraduate coders who spent 15 weeks analyzing political posts written in Russian on Twitter between 2014 and 2015. Their assessment found that on any given day, over 50 percent of political tweets written in Russian were produced by bots. According to Denis Stukal, a co-author and doctoral candidate in NYU’s Wilf Family Department of Politics, this number was as high as 80 percent on some days.

The NYU team, led by Professor of Politics Joshua Tucker, began analyzing Russian-language Twitter posts in 2015. Co-author and Wilf Family Department of Politics Ph.D. student Sergey Sanovich said that social scientists in the past largely saw the social media site as a liberating technology.

“[In 2014] social media was thought of mainly as a ‘liberation technology,’” Stukal said in an interview with WSN. “[It was] a tool to liberalize and democratize societies.”

Indeed, the ease with which social media sites like Twitter allow activists and protesters to mobilize and export their stories across the world played a critical role in the 2011 Egyptian revolution. Hundreds of thousands of protesters in Tunisia and Yemen followed suit, utilizing hashtags and Facebook events to spark the explosive Arab Spring.

Since then, however, autocratic regimes have learned to utilize these technologies for their own benefit. Tucker, Sanovich and Stukal are preparing to release a follow-up paper in the journal Comparative Politics and are interested in how autocratic regimes like Russia use these technologies to target dissent.

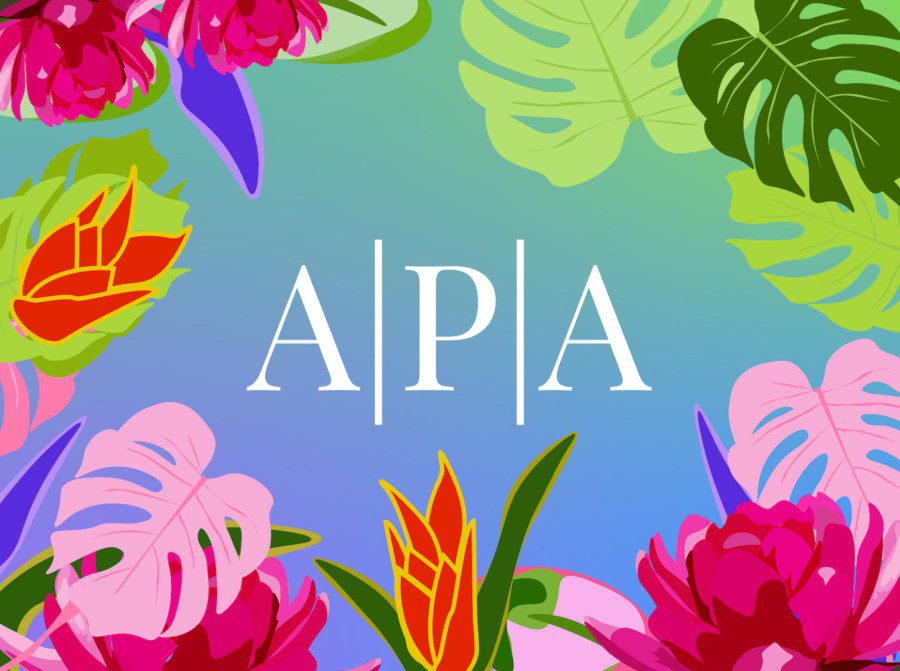

“We were interested in a larger project of how authoritarian regimes respond to online opposition,” Tucker said.

Tucker said these regimes can respond to online opposition in three general ways. They can respond offline by changing legal rules or libel laws, or even by taking over entire social media companies. Regimes can also respond by implementing online restrictions, what one would commonly identify as internet censorship. Tucker pointed to the Chinese government’s “Great Firewall” as an example of online restriction. Finally, regimes can choose to engage with opposition online. With this method, rather than attempting to restrict opposition content, the regime may instead attempt to alter what users see online not by removing content but by adding it. In the case of Russia, according to the writers, this is achieved largely through online bots and trolls.

While the scope of the NYU team’s research was restricted to Russian-speaking Twitter, instances of alleged Russian interference have occurred on an international stage.

The effect of this targeted misinformation could be felt close to NYU. Immediately following the 2016 election, over 16,000 people, including some NYU students, registered for a Facebook event titled “Not My President.” Protesters attending the event marched through lower Manhattan, expressing their displeasure with the election results. According to a report by Motherboard, however, the event’s organizer, BlackMattersUS, was actually run by manipulators with ties to the Russian government. The group has since been banned on Facebook. These anti-Trump protesters had been made pawns in a Russian political interference campaign.

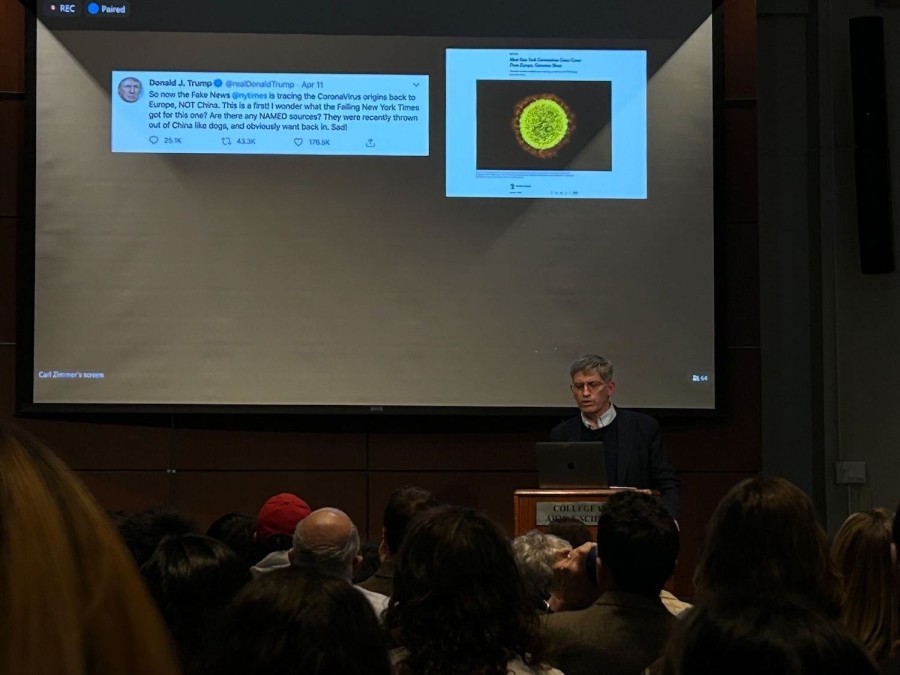

A Facebook event at Union Square in 2017 was part of a Russian misinformation campaign.

While several similar real-world events have occurred throughout the country since, the true frontier of misinformation, according to this report, lies online with bots and trolls.

Though many of the bots detected in the research featured clear pro-Kremlin indicators, they were by no means the only kind of bot. While a plurality of the bots retweeted or posted content favorable to the Russian regime, some opposed the government.

“Some of the bots were opposition bots,” Tucker said. “Some were pro-Ukraine and some of them were just neutral. They were neither pro or anti anybody. There are lots of neutral bots that seem to be doing little more than tweeting news headlines.”

Pro-Kremlin bots made up a plurality of the bots, but were not the majority.

According to Sanovich, it is also possible that a portion of the pro-Ukrainian opposition bots could actually be deceptive pro-Russian software made to appear more radical than it actually is.

The bots in question are far from uniform. While some bots are relatively easy to detect at the consumer level, some are designed more meticulously. Others still fall under a category that Sanovich calls “cyborgs”: automated accounts that are periodically used by humans to fool Twitter’s bot detection algorithms.

For the average Twitter user, Stukal pointed to four key characteristics that may help identify a bot.

(1) Unlike human accounts, many rudimentary bots may have a long sequence of numbers where a name should be.

(2) An account that follows many people but does not have many followers may be a bot.

(3) If a user tweets very often, this may indicate a bot.

(4) While humans mostly tweet from mobile devices, bots usually tweet from other third-party software programs like TweetDeck.
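The four characteristics above can be read as a simple checklist. The following is a minimal sketch of that idea, not the NYU team's detection method: the `Account` fields, the client names and every numeric threshold are hypothetical values chosen only for illustration, since the article gives no cutoffs.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical thresholds and client names, chosen only for illustration;
# the article does not specify any of them.
DIGIT_RUN_LENGTH = 6        # digits in a row that count as a "long sequence"
FOLLOW_RATIO = 10.0         # following-to-follower ratio treated as lopsided
TWEETS_PER_DAY = 100.0      # posting rate treated as "very often"
MOBILE_CLIENTS = {"Twitter for iPhone", "Twitter for Android"}


@dataclass
class Account:
    """Hypothetical account record; field names do not come from a real API."""
    screen_name: str
    following: int
    followers: int
    tweets_per_day: float
    client: str


def bot_signals(account: Account) -> List[str]:
    """Return which of the four characteristics this account exhibits."""
    signals = []
    # (1) A long sequence of numbers where a name should be.
    if re.search(r"\d{%d,}" % DIGIT_RUN_LENGTH, account.screen_name):
        signals.append("numeric_name")
    # (2) Follows many accounts but has few followers.
    if account.following >= FOLLOW_RATIO * max(account.followers, 1):
        signals.append("follows_many_followed_by_few")
    # (3) Tweets very often.
    if account.tweets_per_day >= TWEETS_PER_DAY:
        signals.append("high_tweet_frequency")
    # (4) Posts from something other than a mobile client.
    if account.client not in MOBILE_CLIENTS:
        signals.append("non_mobile_client")
    return signals
```

An account that trips several signals deserves a closer look, but none of them is conclusive on its own: plenty of humans use TweetDeck, and the “cyborg” accounts Sanovich describes are built to evade exactly this kind of check.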

Though the bots analyzed in this case study were restricted to the Russian Twitter sphere, the findings illuminate an issue with social media worldwide. In the U.S., internet-based media companies and aspiring online celebrities have begun purchasing their own computer followers. This bot-supported economy of clicks presents some dangers.

According to Stukal and Sanovich, the solution to this current bombardment of online bots may need to begin with Twitter. Though Twitter already uses its own algorithm to detect and ban bots, an alarming number have slipped through the cracks.

Though Sanovich agreed that Twitter could be doing more to detect bots, he also conceded that abuse of the technology in some form is inevitable. He suggested that complex algorithms alone may not be enough. In the case of Twitter and Facebook, Sanovich said that these firms should employ more people with an understanding of the local language and country.

“You need people who understand the context,” Sanovich said.

A version of this article appeared in the Monday, Jan. 29 print edition. Email Mack DeGeurin at [email protected].

Joan iovino • Feb 11, 2018 at 2:25 am

RANT: Confessions of a “Russian Bot”.

My name is Joan Hunter iovino, and I am a “Russian Bot”.

I WAS a staunch Democrat for 16 years. I believed strongly in voting for the lesser evil, and resented anyone who didn’t. I voted a straight blue ticket every time. I voted in every primary. I was also a dedicated volunteer on many, many Democratic campaigns.

Over the years, I became increasingly disillusioned with the party, the candidates they elevated, and the ones I saw them purposely crush. A sense of rage and betrayal continued to rise in me as I watched Democrats in each branch kowtow to corporate interests, vote for imperial conquests, vote for Draconian “security” measures, refuse to stand up for any of the issues I cared about, and ultimately do nothing substantial to oppose the right wing.

By 2012 I had already vowed never to support another Democrat, and I voted Green for the first time. Then in 2016 Bernie Sanders came along, and it drew me back to the party. I worked full-time on that campaign in organizing and voter outreach.

The things I witnessed the Democratic establishment do, with my own eyes and from the testimony of the voters I worked with, horrified even me, and I already knew they were corrupt and underhanded. They blatantly sabotaged Sanders’ campaign, and they went so far as to deprive legitimate, registered voters of their right to vote. They didn’t just toy with subverting democracy, they broke laws REPEATEDLY, and no one did a DAMN THING about it. Now we have Trump. And they want to blame ME. AND THOSE VOTERS I WORKED WITH THAT THEY CHEATED! AND ceaselessly point at #Russia rather than address their own THOROUGH corruption.

Trump is despicable, but the DEMOCRATS are not the #resistance. People need to hear my story, but instead of listening to my testimony, they just scream that I’m a Russian Bot. It’s beyond adding insult to injury.

If I’m a “Russian bot”, it’s because the Democrats made me one. Russian interference didn’t sour me on establishment Democrats. The party itself and their decades of CORRUPTION malfeasance DID.

Dismiss me if you like, but I’ve come to you with a warning, and if you don’t listen, we are all going down a very dark path.

Don’t run into the arms of the Democrats and these “intelligence agencies” thinking they will save us from Trump. That kind of short-sightedness is beyond foolish.